Attention

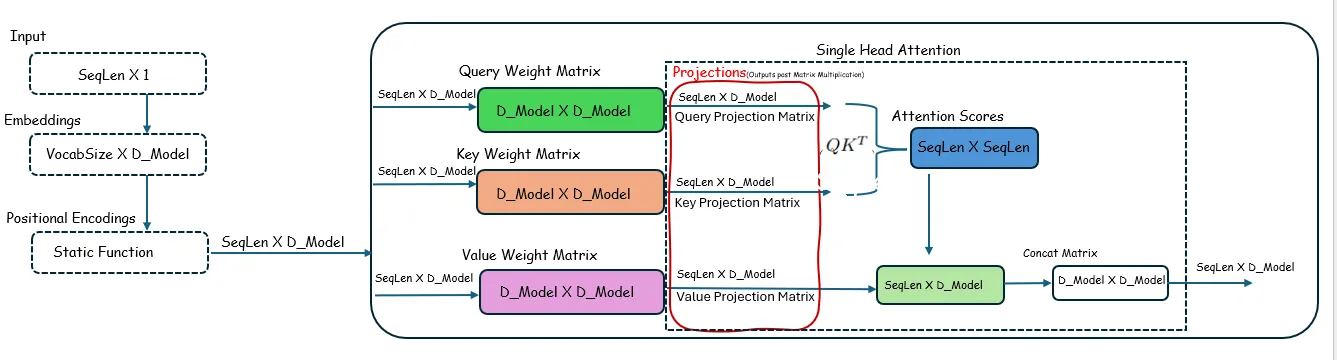

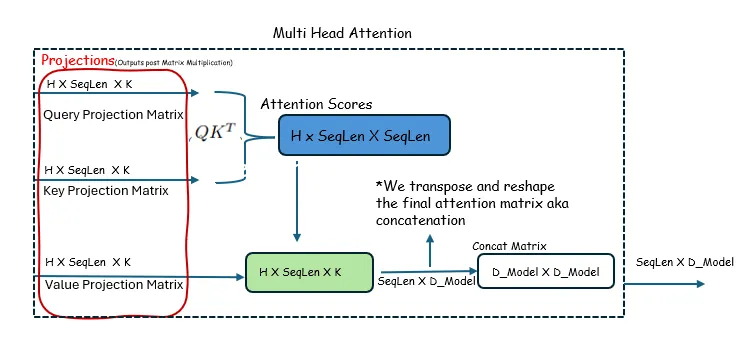

Self-Attention Mechanism

Visual walkthrough of single-headed and multi-headed self-attention — understanding the matrix dimensions and operations.

Single Headed Attention

- Ignore the softmax operation and the scaling step (division by the square root of d_model), because neither operation affects the dimensions of the matrices involved.
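The shape bookkeeping for a single head can be checked with a small sketch. This is an illustration under assumptions, not the walkthrough's own code: the sizes `seq_len = 4` and `d_model = 8`, the weight names `W_q`, `W_k`, `W_v`, and the helper functions are all hypothetical, and the softmax and scaling steps are left out on purpose because they do not change any shape.

```python
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    assert len(a[0]) == len(b), "inner dimensions must match"
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    """Swap rows and columns."""
    return [list(col) for col in zip(*m)]

def shape(m):
    """Return (rows, columns) of a list-of-rows matrix."""
    return (len(m), len(m[0]))

def rand_matrix(rows, cols, rng):
    """Random matrix; the values are irrelevant here, only the shape matters."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]

rng = random.Random(0)
seq_len, d_model = 4, 8  # assumed sizes, chosen only for illustration

X = rand_matrix(seq_len, d_model, rng)    # input:   (seq_len, d_model)
W_q = rand_matrix(d_model, d_model, rng)  # weights: (d_model, d_model)
W_k = rand_matrix(d_model, d_model, rng)
W_v = rand_matrix(d_model, d_model, rng)

Q = matmul(X, W_q)                 # (seq_len, d_model)
K = matmul(X, W_k)                 # (seq_len, d_model)
V = matmul(X, W_v)                 # (seq_len, d_model)

scores = matmul(Q, transpose(K))   # (seq_len, d_model) x (d_model, seq_len) -> (seq_len, seq_len)
output = matmul(scores, V)         # (seq_len, seq_len) x (seq_len, d_model) -> (seq_len, d_model)

print("Q:", shape(Q), "scores:", shape(scores), "output:", shape(output))
```

The output has the same shape as the input `X`, which is what allows attention layers to be stacked.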

Multi-Headed Attention

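- Ignore the softmax operation and the scaling step (division by the square root of d_model; in the standard multi-head formulation the divisor is the square root of the per-head dimension, d_model / h), because neither operation affects the dimensions of the matrices involved.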
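The same shape check can be sketched for several heads. The assumptions here are again illustrative: `num_heads = 2`, a per-head width `d_head = d_model // num_heads`, separate per-head projection matrices, and an output projection named `W_o`. Softmax and scaling are omitted as before.

```python
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    assert len(a[0]) == len(b), "inner dimensions must match"
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    """Swap rows and columns."""
    return [list(col) for col in zip(*m)]

def shape(m):
    """Return (rows, columns) of a list-of-rows matrix."""
    return (len(m), len(m[0]))

def rand_matrix(rows, cols, rng):
    """Random matrix; only the shape matters for this walkthrough."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]

rng = random.Random(0)
seq_len, d_model, num_heads = 4, 8, 2  # assumed sizes, for illustration only
d_head = d_model // num_heads          # per-head width: 4

X = rand_matrix(seq_len, d_model, rng)  # input: (seq_len, d_model)

head_scores, head_outputs = [], []
for _ in range(num_heads):
    # Each head projects from d_model down to d_head.
    W_q = rand_matrix(d_model, d_head, rng)
    W_k = rand_matrix(d_model, d_head, rng)
    W_v = rand_matrix(d_model, d_head, rng)
    Q, K, V = matmul(X, W_q), matmul(X, W_k), matmul(X, W_v)  # each (seq_len, d_head)
    scores = matmul(Q, transpose(K))                          # (seq_len, seq_len)
    head_scores.append(scores)
    head_outputs.append(matmul(scores, V))                    # (seq_len, d_head)

# Concatenate the heads column-wise: (seq_len, num_heads * d_head) = (seq_len, d_model).
concat = [[value for head in head_outputs for value in head[i]] for i in range(seq_len)]

W_o = rand_matrix(d_model, d_model, rng)  # output projection
output = matmul(concat, W_o)              # (seq_len, d_model)

print("per-head output:", shape(head_outputs[0]),
      "concat:", shape(concat), "output:", shape(output))
```

Each head works in a narrower `d_head`-wide space, but concatenating the heads restores the full `d_model` width, so the final output again matches the shape of the input.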